If you're building stuff with large language models or AI agents, chances are you'll need web data. And that means writing a crawler, ideally something fast, flexible, and not a total pain to set up. Like, we probably don't want to spend countless hours trying to run a simple "hello world" app. That's where Crawl4AI web scraping comes in.

Crawl4AI is an open-source crawler made by devs, for devs. If you're asking "what is Crawl4AI?", the short version is that it's a tool built for control, speed, and structured output, with enough room to do serious things without getting buried in boilerplate.

In this Crawl4AI tutorial, we'll walk through how to install it, spin up your first crawler, extract structured data, and use smart crawling that actually knows when to stop digging. No useless info, just working examples. Let's go!

Quick Answer: What is Crawl4AI?

Crawl4AI is an open-source, async Python library designed for AI-ready web crawling. It converts complex websites into clean Markdown or JSON, supporting advanced features like adaptive crawling, CSS-based data extraction, and automated PDF/screenshot generation. It is the ideal toolkit for powering RAG pipelines and LLM applications.

Installation

Prerequisites

Before proceeding to the main part of the article, let's make sure you have:

- Python 3.8+

- pip (typically comes with Python)

- A terminal

- A code editor (like VS Code, but anything works)

Crawl4AI installation

Crawl4AI can be installed as a Python package. There's also a Docker option, which we won't cover here but you can check it out in their official docs.

First, install the package:

pip install crawl4ai

Then run the setup command to configure the browser, followed by the doctor command to check that everything is in order:

crawl4ai-setup

crawl4ai-doctor

This installs the default async version of Crawl4AI which uses Playwright under the hood.

Learn more about scraping with Playwright in our dedicated tutorial.

Troubleshooting Playwright (if needed)

The setup command should handle Playwright automatically. But if you run into errors, try installing it manually:

playwright install

If that doesn't work, use the more specific command:

python -m playwright install chromium

This method works better in some environments.
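If you're not sure whether the install actually landed in the Python environment you're running, a quick check like the one below can help. It's a minimal sketch using only the standard library; `check_packages` is a hypothetical helper name, not part of Crawl4AI, and it only tells you whether the packages are importable, not whether the browser binaries are installed.

```python
import importlib.util


def check_packages(names=("crawl4ai", "playwright")):
    """Return {package_name: bool} telling whether each package is importable."""
    return {name: importlib.util.find_spec(name) is not None for name in names}


if __name__ == "__main__":
    for name, found in check_packages().items():
        print(f"{name}: {'installed' if found else 'missing'}")
```

If something shows up as missing, you're probably running a different interpreter or virtual environment than the one you installed into.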

Writing your first crawler

In this example, you'll spin up an async crawler that hits Hacker News, pulls the page content, and writes it out as a Markdown file. Crawl4AI's async API and built-in browser support keep the code short and clear.

Here's a basic example:

import asyncio

from crawl4ai import (
    AsyncWebCrawler,
    BrowserConfig,
    CrawlerRunConfig,
    DefaultMarkdownGenerator,
    PruningContentFilter,
    CrawlResult
)


async def main():
    # Set up the browser config
    browser_config = BrowserConfig(
        headless=True,  # run without opening a browser window
        verbose=True  # print logs while crawling
    )

    # Launch the crawler with the given browser config
    async with AsyncWebCrawler(config=browser_config) as crawler:
        # Define how to process and clean the content
        crawler_config = CrawlerRunConfig(
            markdown_generator=DefaultMarkdownGenerator(
                content_filter=PruningContentFilter()  # removes boilerplate
            )
        )

        # Run the crawler on the target URL
        result: CrawlResult = await crawler.arun(
            url="https://news.ycombinator.com",  # Hacker News
            config=crawler_config
        )

        # Save the markdown result to a file
        with open("hacker_news.md", "w", encoding="utf-8") as f:
            f.write(result.markdown.raw_markdown)


if __name__ == "__main__":
    asyncio.run(main())

Sample output:

[INIT].... → Crawl4AI 0.7.1
[FETCH]... ↓ https://news.ycombinator.com | ✓ | ⏱: 1.43s
[SCRAPE].. ◆ https://news.ycombinator.com | ✓ | ⏱: 0.15s
[COMPLETE] ● https://news.ycombinator.com | ✓ | ⏱: 1.58s

What this code does

- You configure the browser via BrowserConfig. headless=True runs the browser in the background.

- AsyncWebCrawler launches and manages the browser session for you.

- CrawlerRunConfig picks how you want the output: in this case, a Markdown generator with a pruning filter.

- Calling crawler.arun(...) fetches the page, processes the content, and hands you the result.

- result.markdown.raw_markdown is the page as a Markdown string, ready to save to a file. The pruned version produced by the content filter is exposed separately as result.markdown.fit_markdown.

Saving a full-page PDF and screenshot from a live webpage

Sometimes you don't want just the raw text — you want the whole page, as it looked. Whether it's a financial snapshot, product listing, or breaking news article, a full-page screenshot or PDF can come in handy. Crawl4AI makes this super easy, even for long or dynamic pages.

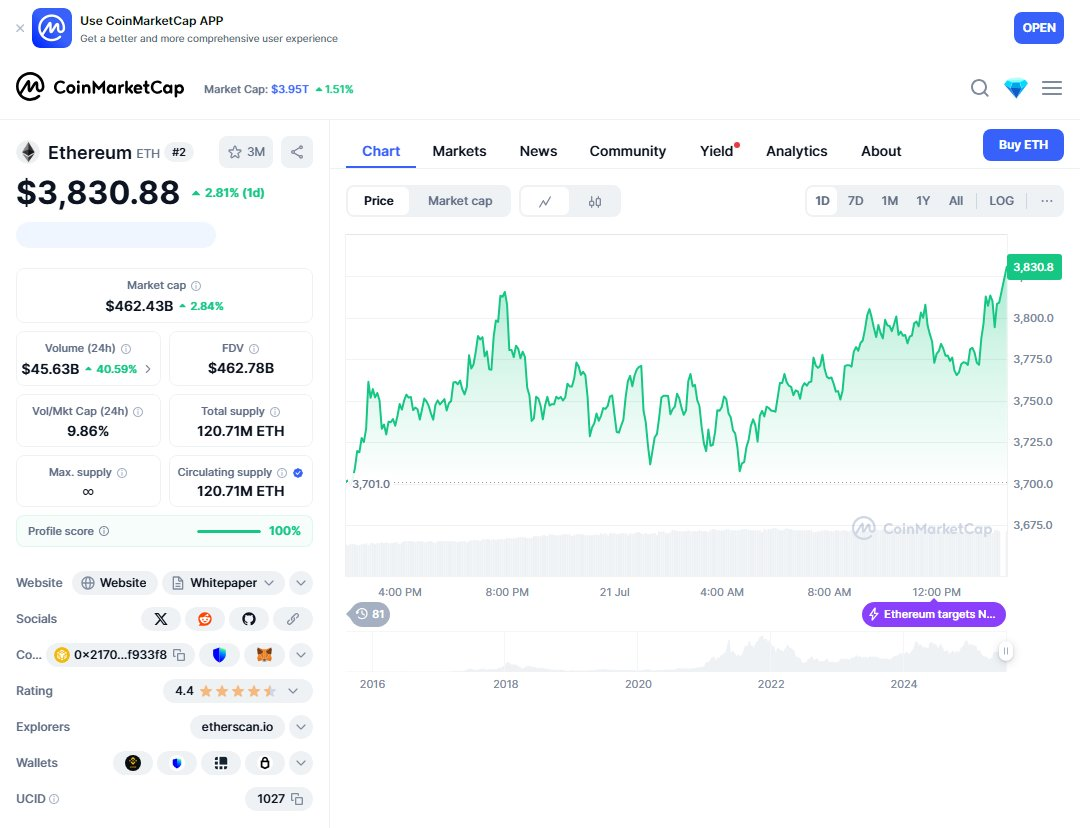

In this example, we'll hit the Ethereum (ETH-USD) quote page on CoinMarketCap and save both a full-page PDF and a screenshot.

import os
import asyncio
from base64 import b64decode

from crawl4ai import AsyncWebCrawler, CacheMode, CrawlerRunConfig

# Directory containing this script; adjust the path if needed
__location__ = os.path.realpath(os.path.join(os.getcwd(), os.path.dirname(__file__)))


async def main():
    async with AsyncWebCrawler() as crawler:
        result = await crawler.arun(
            url='https://coinmarketcap.com/currencies/ethereum/',
            config=CrawlerRunConfig(
                cache_mode=CacheMode.BYPASS,
                pdf=True,
                screenshot=True
            )
        )

        if result.success:
            # Save screenshot as PNG (the screenshot arrives base64-encoded)
            if result.screenshot:
                with open(os.path.join(__location__, "eth_usd_screenshot.png"), "wb") as f:
                    f.write(b64decode(result.screenshot))

            # Save page as PDF (already raw bytes)
            if result.pdf:
                with open(os.path.join(__location__, "eth_usd_page.pdf"), "wb") as f:
                    f.write(result.pdf)


if __name__ == "__main__":
    asyncio.run(main())

Instead of stitching together a bunch of viewport screenshots, Crawl4AI taps into the browser's native PDF rendering. It's cleaner, more reliable, and works even when the page scrolls for miles.

This trick works great for archiving pages, capturing dashboards, saving receipts, or grabbing content that changes frequently.
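Since the screenshot comes back as a base64 string, it can be worth sanity-checking the decoded bytes before writing them to disk. Here's a small sketch of that idea. `save_base64_png` is a hypothetical helper (not a Crawl4AI function), and it assumes the screenshot is PNG data, as the `.png` filename in the example suggests.

```python
import base64
from pathlib import Path

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"  # the first 8 bytes of every PNG file


def save_base64_png(b64_data: str, path: str) -> int:
    """Decode base64 data, check it looks like a PNG, write it; return the byte count."""
    raw = base64.b64decode(b64_data)
    if not raw.startswith(PNG_SIGNATURE):
        raise ValueError("decoded data does not start with the PNG signature")
    Path(path).write_bytes(raw)
    return len(raw)
```

In the crawler script you could call it as `save_base64_png(result.screenshot, "eth_usd_screenshot.png")` in place of the manual `b64decode` and `open` calls.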

What this code does

- Opens the CoinMarketCap page for Ethereum

- Exports a full-page PDF

- Captures a screenshot of the page

- Saves both the PDF and PNG next to the script (the __location__ directory)


Check out our tutorial on BrowserUse, which covers how to use AI agents for scraping.

Adaptive crawling example

Adaptive crawling figures out how deep it needs to go based on relevance, so you don't end up fetching pages that don't matter. In this snippet, we start at the asyncio docs and look for “async await context managers coroutines.”

import asyncio

from crawl4ai import AsyncWebCrawler, AdaptiveCrawler, BrowserConfig


async def main():
    browser_config = BrowserConfig(verbose=True)

    async with AsyncWebCrawler(config=browser_config) as crawler:
        adaptive = AdaptiveCrawler(crawler)

        result = await adaptive.digest(
            start_url="https://docs.python.org/3/library/asyncio.html",
            query="async await context managers coroutines"
        )

        adaptive.print_stats(detailed=False)

        # Show top 5 relevant pages
        relevant_pages = adaptive.get_relevant_content(top_k=5)
        for i, page in enumerate(relevant_pages, 1):
            print(f"{i}. {page['url']}")
            print(f" Score: {page['score']:.2%}")
            snippet = (page['content'] or "")[:200].replace("\n", " ")
            print(f" Preview: {snippet}...")

        print(f"\nConfidence: {adaptive.confidence:.2%}")
        print(f"Total pages crawled: {len(result.crawled_urls)}")


if __name__ == "__main__":
    asyncio.run(main())

This is great if you need targeted data (like specific docs or tech articles) without spinning through every link on the site.

What this code does

- It kicks off at the URL you give and hunts for pages that match your query

- Once it's confident it has enough good content, it stops crawling

- It prints out a quick stats summary and the top hits it found

- And it just works out of the box; no extra config needed for basic use

Example output:

**Crawl Statistics**

Adaptive Crawl Stats - Query: `async await context managers coroutines`

| Metric | Value |
|------------------|----------------|
| Pages Crawled | 4 |
| Unique Terms | 1218 |
| Total Terms | 13325 |
| Content Length | 111,339 chars |
| Pending Links | 100 |
| | |
| Confidence | 73.30% |
| Coverage | 100.00% |
| Consistency | 29.66% |
| Saturation | 81.34% |
| | |
| Is Sufficient? | Yes |

**Most Relevant Pages**

1. https://docs.python.org/3/library/asyncio-task.html
   Relevance Score: 100.00%
   Preview: [  ](https://www.python.org/) dev (3.15) pre (3.14) 3.13.5 3.12 3.11 3.10 3.9 3.8 3.7 3.6...

2. https://docs.python.org/3/library/asyncio.html
   Relevance Score: 60.00%
   Preview: [  ](https://www.python.org/) dev (3.15) pre (3.14) 3.13.5 3.12 3.11 3.10 3.9 3.8 3.7 3.6...

3. https://docs.python.org/3/library/asyncio-runner.html
   Relevance Score: 60.00%
   Preview: [  ](https://www.python.org/) dev (3.15) pre (3.14) 3.13.5 3.12 3.11 3.10 3.9 3.8 3.7 3.6...

4. https://docs.python.org/3/library/asyncio-api-index.html
   Relevance Score: 60.00%
   Preview: [  ](https://www.python.org/) dev (3.15) pre (3.14) 3.13.5 3.12 3.11 3.10 3.9 3.8 3.7 3.6...

**Final Confidence:** 73.30%

**Total Pages Crawled:** 4

**Knowledge Base Size:** 4 documents

**Information Sufficiency Check**

~ Moderate confidence – can answer basic questions

What's happening behind the scenes

When you call adaptive.digest(), here's the play‑by‑play:

- Fetch the first page and pull out its text into a little in‑memory “knowledge base.”

- Score every new link on that page by combining:

  - relevance (term overlap or semantic match)

  - novelty (is the content actually new?)

  - authority (domain rank, URL depth, etc.)

- Crawl the top links (up to top_k_links), add their content to your knowledge base, and update three core metrics:

  - coverage: how much of your query terms/concepts you've seen

  - consistency: how well the pages agree with each other

  - saturation: how many fresh terms each new page adds

- Loop: keep picking, scoring, and crawling until one of these kicks in:

  - your overall confidence (a blend of those metrics) passes confidence_threshold

  - you hit max_pages

  - each new page adds less than min_gain_threshold worth of info

Behind the scenes it's all async and incremental, so you get live stats and can even stream results as they arrive. So yeah, that's adaptive crawling in a nutshell: smart, focused, and tailored to exactly what you're looking for.
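To make that loop more concrete, here's a toy version that runs over an in-memory "site" instead of the real web. This is a rough sketch, not Crawl4AI's actual implementation: `adaptive_crawl`, the `site` dict shape, and the `min_gain` parameter are all made up for illustration, links are scored only by query-term overlap with their anchor text, and plain coverage stands in for the blended confidence score.

```python
def tokenize(text):
    return set(text.lower().split())


def adaptive_crawl(site, start_url, query, confidence_threshold=0.8,
                   max_pages=10, top_k_links=2, min_gain=1):
    """Toy adaptive crawl. `site` maps url -> {"text": str, "links": {url: anchor_text}}."""
    query_terms = tokenize(query)
    seen_terms = set()   # the in-memory "knowledge base" (just a bag of terms)
    crawled = []
    candidates = {}      # url -> anchor text for links not crawled yet
    batch = [start_url]
    reason = "no more links"

    while batch:
        gained = 0
        for url in batch:
            page = site[url]
            terms = tokenize(page["text"])
            gained += len(terms - seen_terms)   # how many fresh terms this page adds
            seen_terms |= terms
            crawled.append(url)
            for link, anchor in page["links"].items():
                if link not in crawled and link in site:
                    candidates[link] = anchor
        for url in crawled:
            candidates.pop(url, None)

        # Coverage of the query terms stands in for the blended confidence.
        confidence = len(query_terms & seen_terms) / len(query_terms)
        if confidence >= confidence_threshold:
            reason = "confidence reached"
            break
        if len(crawled) >= max_pages:
            reason = "max_pages reached"
            break
        if gained < min_gain:
            reason = "too little new information"
            break

        # Rank remaining links by overlap between the query and their anchor text.
        ranked = sorted(candidates,
                        key=lambda u: len(query_terms & tokenize(candidates[u])),
                        reverse=True)
        batch = ranked[:min(top_k_links, max_pages - len(crawled))]

    return crawled, confidence, reason
```

The real crawler uses richer scoring, but the shape is the same: crawl a few promising links, re-measure, and bail out as soon as one of the stop conditions fires.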

Scraping Amazon product results with JSON and CSS selectors

Crawl4AI lets you pull structured data straight out of complex pages using CSS selectors. No need for custom scrapers or messy parsing.

In this example, we search Amazon for "Samsung Galaxy Tab" and grab product info — titles, prices, ratings, reviews, all that good stuff.

import asyncio
import json

from crawl4ai import AsyncWebCrawler
from crawl4ai import JsonCssExtractionStrategy
from crawl4ai.async_configs import BrowserConfig, CrawlerRunConfig


async def extract_amazon_products():
    browser_config = BrowserConfig(browser_type="chromium", headless=True)

    crawler_config = CrawlerRunConfig(
        extraction_strategy=JsonCssExtractionStrategy(
            schema={
                "name": "Amazon Product Search Results",
                "baseSelector": "[data-component-type='s-search-result']",
                "fields": [
                    {"name": "title", "selector": "a h2 span", "type": "text"},
                    {"name": "url", "selector": ".puisg-col-inner a", "type": "attribute", "attribute": "href"},
                    {"name": "image", "selector": ".s-image", "type": "attribute", "attribute": "src"},
                    {"name": "rating", "selector": ".a-icon-star-small .a-icon-alt", "type": "text"},
                    {"name": "reviews_count", "selector": "[data-csa-c-func-deps='aui-da-a-popover'] ~ span span", "type": "text"},
                    {"name": "price", "selector": ".a-price .a-offscreen", "type": "text"},
                    {"name": "original_price", "selector": ".a-price.a-text-price .a-offscreen", "type": "text"},
                    {"name": "sponsored", "selector": ".puis-sponsored-label-text", "type": "exists"},
                    {"name": "delivery_info", "selector": "[data-cy='delivery-recipe'] .a-color-base.a-text-bold", "type": "text", "multiple": True},
                ]
            }
        )
    )

    url = "https://www.amazon.com/s?k=Samsung+Galaxy+Tab"

    async with AsyncWebCrawler(config=browser_config) as crawler:
        result = await crawler.arun(url=url, config=crawler_config)

        if result and result.extracted_content:
            products = json.loads(result.extracted_content)

            for product in products:
                print("\nProduct Details:")
                print(f"Title: {product.get('title')}")
                print(f"Price: {product.get('price')}")
                print(f"Original Price: {product.get('original_price')}")
                print(f"Rating: {product.get('rating')}")
                print(f"Reviews: {product.get('reviews_count')}")
                print(f"Sponsored: {'Yes' if product.get('sponsored') else 'No'}")
                if product.get("delivery_info"):
                    print(f"Delivery: {' '.join(product['delivery_info'])}")
                print("-" * 80)


if __name__ == "__main__":
    asyncio.run(extract_amazon_products())

The setup is simple: define what you want using CSS, and Crawl4AI turns the page into clean JSON. You can use this data directly in your pipeline or app.

Heads up: Amazon changes its layout often, and some parts are loaded dynamically. That's why this example uses a browser backend. If something breaks, tweak your selectors.

What this code does

- Spins up a browser with headless Chromium

- Tells Crawl4AI which fields to extract using CSS selectors

- Hits the Amazon search results page for "Samsung Galaxy Tab"

- Pulls out structured JSON with all the product data

- Prints out things like title, price, rating, and delivery info

Sample output:

Product Details:
Title: Galaxy Tab A9+ Tablet 11” 64GB Android Tablet, Big Screen, Quad Speakers, Upgraded Chipset, Multi Window Display, Slim, Light, Durable Design, US Version, 2024, Graphite
Price: $166.96
Original Price: $219.99
Rating: 4.4 out of 5 stars
Reviews: 14,720
Sponsored: No
Delivery: Tue, Jul 29
--------------------------------------------------------------------------------

Product Details:
Title: Galaxy Tab S10 FE 128GB WiFi Android Tablet, Large Display, Long Battery Life, Exynos 1580 Processor, IP68, S Pen for Note-Taking, US Version, 2 Yr Manufacturer Warranty, Silver
Price: $449.99
Original Price: $499.99
Rating: 4.6 out of 5 stars
Reviews: 129
Sponsored: No
Delivery: Tue, Jul 29
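Notice that every extracted value is a string: "$166.96", "4.4 out of 5 stars", "14,720". If you want to sort or filter on them, you'll probably want numbers. Below is a small post-processing sketch. The helper names are hypothetical (they're not part of Crawl4AI), and the parsing assumes values formatted like the sample output above; abbreviated counts such as "1.2K" would need extra handling.

```python
import re


def parse_price(text):
    """'$1,299.00' -> 1299.0; returns None when no number is present."""
    if not text:
        return None
    match = re.search(r"\d[\d,]*(?:\.\d+)?", text)
    return float(match.group().replace(",", "")) if match else None


def parse_rating(text):
    """'4.4 out of 5 stars' -> 4.4"""
    if not text:
        return None
    match = re.match(r"\s*(\d+(?:\.\d+)?)", text)
    return float(match.group(1)) if match else None


def parse_count(text):
    """'14,720' -> 14720 (assumes plain digits with optional separators)."""
    digits = re.sub(r"\D", "", text or "")
    return int(digits) if digits else None


def clean_product(product):
    """Convert one extracted product dict into typed fields."""
    return {
        "title": (product.get("title") or "").strip(),
        "price": parse_price(product.get("price")),
        "original_price": parse_price(product.get("original_price")),
        "rating": parse_rating(product.get("rating")),
        "reviews_count": parse_count(product.get("reviews_count")),
        "sponsored": bool(product.get("sponsored")),
    }
```

With that in place, something like `cleaned = [clean_product(p) for p in products]` gives you records you can sort by price or filter by rating.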

Extraction and chunking strategies

Crawl4AI gives you several built‑in strategies for pulling data and breaking it into chunks. Pick what fits your target content:

Extraction strategies

- RegexExtractionStrategy: quick and light. Ideal for emails, phone numbers, URLs, dates—anything you can match with a regex.

- JsonCssExtractionStrategy: point‑and‑shoot for well‑structured HTML. Define a base selector and field selectors, get back JSON.

- LLMExtractionStrategy: when you need smarts—complex layouts, nested data, or free‑form content. Uses an LLM to parse and structure data.

- CosineStrategy: clusters similar content and filters by semantic similarity. Great for finding topic‑specific text or grouping related sections.
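To give a feel for the regex approach, here's the underlying idea in plain Python with the standard `re` module. This is only an illustration of pattern-based extraction, not the RegexExtractionStrategy API itself; the patterns and the `extract_patterns` name are assumptions made for the example, and the patterns are deliberately simple.

```python
import re

# Deliberately simple patterns; real-world email/URL matching has more edge cases.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
URL_RE = re.compile(r"https?://[^\s<>\"']+")


def extract_patterns(text):
    """Return de-duplicated, sorted emails and URLs found in the text."""
    return {
        "emails": sorted(set(EMAIL_RE.findall(text))),
        "urls": sorted(set(URL_RE.findall(text))),
    }
```

No browser logic, no model calls: just patterns over text, which is why this style of extraction is so cheap.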

Chunking strategies

- RegexChunking: splits on blank lines or custom patterns.

- SlidingWindowChunking: overlapping windows of text—handy for long docs where context matters.

- OverlappingWindowChunking: fixed‑size chunks with overlap—balances breadth and detail.
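If "overlapping windows" sounds abstract, this is roughly what it means. The function below is a standalone sketch of word-based sliding-window chunking; `sliding_window_chunks` and its parameters are names picked for this example and may not match how Crawl4AI's chunkers are configured.

```python
def sliding_window_chunks(text, window_size=100, step=50):
    """Split text into chunks of `window_size` words, moving `step` words each time."""
    if window_size <= 0 or step <= 0:
        raise ValueError("window_size and step must be positive")
    words = text.split()
    if len(words) <= window_size:
        return [" ".join(words)] if words else []
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + window_size]))
        if start + window_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks
```

When `step` is smaller than `window_size`, neighboring chunks share words, so a sentence cut at a chunk boundary still appears whole in at least one chunk.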

Choosing the right approach

- Need simple patterns? Go regex.

- HTML with consistent markup? Use CSS extraction.

- Unstructured or deeply nested data? LLM to the rescue.

- Want to group or filter by meaning? Try cosine clustering.

And for big blobs of text, layer on a chunking strategy so your downstream models or processors don’t choke on huge inputs. Mix and match: extract structure with CSS, then run regex or LLM on the chunks you care about.

Crawling multiple pages with deep crawling

So far we've just grabbed single pages. But what if you want to go deeper — like follow links and map out a whole section of a site?

Crawl4AI has deep crawling built-in. It uses strategies like BFS (breadth-first search), and you can tune it with options like max_depth to control how far it goes. By default, it sticks to internal links so you don't wander off-site.

Here's a basic example: we start at the Crawl4AI docs homepage and follow links up to two levels deep.

import asyncio

from crawl4ai import AsyncWebCrawler, CrawlerRunConfig
from crawl4ai.deep_crawling import BFSDeepCrawlStrategy
from crawl4ai.content_scraping_strategy import LXMLWebScrapingStrategy


async def main():
    config = CrawlerRunConfig(
        deep_crawl_strategy=BFSDeepCrawlStrategy(
            max_depth=2,  # crawl 2 levels deep
            include_external=False  # stay inside the same domain
        ),
        scraping_strategy=LXMLWebScrapingStrategy(),
        verbose=True
    )

    async with AsyncWebCrawler() as crawler:
        results = await crawler.arun(
            url="https://docs.crawl4ai.com", config=config
        )

        print(f"Crawled {len(results)} pages")
        for page in results[:5]:  # show first few URLs
            print("→", page.url)


if __name__ == "__main__":
    asyncio.run(main())

What this code does

- Starts crawling from https://docs.crawl4ai.com

- Follows internal links up to depth 2

- Uses LXML (fast and lightweight) to pull content from each page

- Returns a list of all visited pages with content

Want to go deeper? Just bump up max_depth. Want to follow links to other domains too? Set include_external=True.

This is handy for crawling full sites, making search indexes, or giving your AI agent a proper knowledge base.
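To see what max_depth and include_external actually do, it helps to strip away the browser and look at the traversal itself. The sketch below walks a hard-coded link graph breadth-first. It's a toy model, not Crawl4AI's BFSDeepCrawlStrategy: `bfs_crawl` and the `links` dict are invented for the example, and "same domain" is reduced to comparing hostnames.

```python
from collections import deque
from urllib.parse import urlparse


def bfs_crawl(links, start_url, max_depth=2, max_pages=100, include_external=False):
    """Breadth-first walk over `links` (url -> list of outgoing urls).

    Returns the visit order as a list of (url, depth) tuples.
    """
    start_host = urlparse(start_url).netloc
    visited = {start_url}
    queue = deque([(start_url, 0)])
    order = []

    while queue and len(order) < max_pages:
        url, depth = queue.popleft()
        order.append((url, depth))
        if depth == max_depth:
            continue  # do not expand links beyond the depth limit
        for nxt in links.get(url, []):
            if nxt in visited:
                continue
            if not include_external and urlparse(nxt).netloc != start_host:
                continue  # skip off-site links
            visited.add(nxt)
            queue.append((nxt, depth + 1))
    return order
```

Because the queue is first-in, first-out, every depth-1 page is visited before any depth-2 page, which is exactly the "level by level" behavior BFS is known for.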

What's happening behind the scenes

Deep crawling in Crawl4AI is powered by a few core components you can mix and match:

Strategy

- BFS (breadth‑first): explores all links at one level before going deeper

- DFS (depth‑first): dives down a branch fully, then backtracks

- BestFirst: scores links (e.g. with keyword or custom scorers) and visits the highest‑scoring ones first

Filters

Use FilterChain to include or exclude URLs by pattern, domain, content type, SEO score, or content relevance.

Scorers

Plug in a scorer (like KeywordRelevanceScorer) to rank links by relevance, novelty, or authority before crawling them.

Control knobs

- max_depth limits how many levels you go

- max_pages caps the total pages crawled

- include_external decides whether to follow off‑domain links

- score_threshold skips low‑scoring URLs

Streaming vs non‑streaming

- Non‑streaming waits for the full crawl to finish, then returns everything

- Streaming yields pages as they're found, so you can process early results immediately
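The difference is easy to see with a plain async generator. The snippet below is a simulation (no real crawling, and none of these functions are Crawl4AI APIs): `crawl_stream` yields pages one at a time, `crawl_all` collects them into a list first, and `first_match` shows why streaming is useful when you can stop early.

```python
import asyncio


async def crawl_stream(urls):
    """Async generator: yields each (simulated) page as soon as it is ready."""
    for url in urls:
        await asyncio.sleep(0)  # stand-in for the real fetch
        yield {"url": url}


async def crawl_all(urls):
    """Non-streaming: wait for everything, then return the full list."""
    return [page async for page in crawl_stream(urls)]


async def first_match(urls, keyword):
    """Streaming: stop consuming as soon as a matching page shows up."""
    async for page in crawl_stream(urls):
        if keyword in page["url"]:
            return page
    return None
```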

Metadata on each page

Results include crawl depth, score, and any custom metadata so you can inspect exactly how and why each link was chosen.

By tweaking these pieces (strategy, filters, scorers, and limits) you get complete control over how deeply and where your crawler goes, making it easy to map a whole site or zero in on the content you actually care about.
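As a rough picture of how a best-first strategy combines a scorer with max_pages and score_threshold, here's a toy version built on a priority queue. Everything in it is an assumption made for illustration: `keyword_score` is a crude stand-in for a real relevance scorer, and `best_first_crawl` works on a hard-coded link graph rather than live pages.

```python
import heapq


def keyword_score(url, keywords):
    """Fraction of keywords that appear in the URL (a stand-in for a real scorer)."""
    url = url.lower()
    return sum(kw.lower() in url for kw in keywords) / len(keywords)


def best_first_crawl(links, start_url, keywords, max_pages=5, score_threshold=0.0):
    """Visit the highest-scoring known link first. Returns [(url, score), ...]."""
    visited = {start_url}
    # heapq is a min-heap, so scores are negated to pop the highest score first.
    heap = [(-1.0, 0, start_url)]
    counter = 1  # tie-breaker: equal scores pop in discovery order
    order = []

    while heap and len(order) < max_pages:
        neg_score, _, url = heapq.heappop(heap)
        order.append((url, -neg_score))
        for nxt in links.get(url, []):
            score = keyword_score(nxt, keywords)
            if nxt in visited or score < score_threshold:
                continue  # already queued, or not relevant enough to bother with
            visited.add(nxt)
            heapq.heappush(heap, (-score, counter, nxt))
            counter += 1
    return order
```

Compared with BFS, the only real change is the data structure: a priority queue instead of a FIFO queue, so relevance decides what gets crawled next rather than discovery order.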

Want easier scraping? Try ScrapingBee's AI-powered API!

Don't want to mess with browsers, selectors, or anti-bot tricks? Just want the data? ScrapingBee might be your thing.

It offers an AI-powered scraping API that takes care of all the annoying parts — rotating IPs, JavaScript rendering, changing layouts, captchas — and just gives you clean, structured JSON. You tell it what you want in plain English, and boom, it scrapes it for you.

Why it's nice

- No setup or infra — it all runs on their side

- AI figures out what to extract (no CSS selectors required)

- Works even on sites that hate scrapers (Amazon, LinkedIn, etc.)

- Output comes as clean JSON you can plug into anything

- Great for scraping e-commerce listings, contact info, or summarizing articles

You can grab a free trial here — no credit card needed.

Example: Scrape Amazon products with one AI prompt

# pip install scrapingbee

from scrapingbee import ScrapingBeeClient
import json

client = ScrapingBeeClient(api_key='SIGN_UP_TO_GET_YOUR_FREE_API_KEY')

response = client.get(
    'https://www.amazon.com/s?k=dslr+camera',
    params={
        'ai_query': 'Return a list of products and their prices and a link to the product page',
        'ai_extract_rules': json.dumps({
            "product name": {
                'type': 'list',
                'description': 'the full name of the product verbatim from the page',
            },
            "product price": {
                'type': 'number',
                'description': 'price of the product',
            },
            "link to product page": {
                'type': 'string',
                'description': 'the url that links to the products page',
            }
        })
    }
)

print('Response HTTP Status Code: ', response.status_code)
print('Response HTTP Response Body: ', response.content)

Key Features of the Crawl4AI Web Scraping Tool

The Crawl4AI web scraping tool is built with modern AI workflows in mind, which means it’s not just about grabbing HTML — it’s about getting clean, structured, and useful data fast. Here are the key features that make the Crawl4AI scraper stand out.

Asynchronous Crawling for Speed

Crawl4AI uses an asynchronous architecture, which means it can crawl multiple pages at the same time instead of going one by one. This makes it significantly faster and more efficient for large-scale scraping tasks or AI pipelines.

Instead of waiting for each request to finish, it handles concurrency out of the box, so you can scale without rewriting your logic.
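Here's the general pattern in plain asyncio, in case you haven't used it before. It's a simulation: `fake_fetch` just sleeps instead of hitting the network, and `fetch_all` is a hypothetical helper. Crawl4AI ships its own batch method (arun_many) for crawling several URLs, so treat this as an illustration of the concurrency idea rather than something you'd need to write yourself.

```python
import asyncio


async def fake_fetch(url, delay=0.05):
    """Pretend to download a page; the sleep stands in for network latency."""
    await asyncio.sleep(delay)
    return f"<html>{url}</html>"


async def fetch_all(urls, max_concurrency=5):
    """Fetch all URLs concurrently, with at most `max_concurrency` in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def bounded(url):
        async with semaphore:
            return await fake_fetch(url)

    # gather() returns results in the same order as the input URLs.
    return await asyncio.gather(*(bounded(u) for u in urls))


if __name__ == "__main__":
    pages = asyncio.run(fetch_all([f"https://example.com/{i}" for i in range(10)]))
    print(f"Fetched {len(pages)} pages")
```

The semaphore is the important part: it lets you run many requests at once without opening an unbounded number of connections against the target site.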

Structured output for AI workflows

One of the biggest advantages of the Crawl4AI web scraping tool is its ability to generate structured output like JSON, Markdown, or cleaned HTML.

This is especially useful when you're working with LLMs, RAG systems, or any pipeline where raw HTML would just create extra work. You get data that’s already usable.

Smart (adaptive) crawling

Unlike traditional scrapers that just follow links blindly, the Crawl4AI scraper can adapt its behavior based on the content it finds. It can decide when enough data has been collected and stop crawling automatically.

That means less noise, fewer wasted requests, and cleaner datasets.

Proxy support and rotation

Crawl4AI includes built-in proxy support with rotation strategies, so you can distribute requests across multiple IPs and avoid blocks.

You can configure proxies directly in code, rotate them per request, and handle authentication without extra tooling.
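The simplest rotation strategy is round-robin: hand out proxies in order and wrap around at the end. The sketch below shows that idea on its own. `RoundRobinProxies` and `assign_proxies` are hypothetical names for this example and are not Crawl4AI classes; check the official docs for how proxies are actually passed to the crawler.

```python
from itertools import cycle


class RoundRobinProxies:
    """Hands out proxies one after another, looping back to the start."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._pool = cycle(proxies)

    def next_proxy(self):
        return next(self._pool)


def assign_proxies(urls, proxies):
    """Pair each URL with the next proxy in the rotation."""
    rotator = RoundRobinProxies(proxies)
    return [(url, rotator.next_proxy()) for url in urls]
```

For example, three URLs and two proxies come out as proxy 1, proxy 2, then proxy 1 again.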

Anti-bot bypass mechanisms

Modern websites don’t like scrapers — Crawl4AI deals with that using stealth mode, browser fingerprinting tweaks, and even “undetected browser” setups for tougher targets.

It can simulate real user behavior (like scrolling and interaction), helping you bypass common protections like Cloudflare or bot detection systems.

JavaScript rendering and real browser control

Since Crawl4AI runs on top of a browser automation stack, it can handle JavaScript-heavy websites, dynamic content, and interactive pages without hacks.

You also get control over sessions, headers, cookies, and browser behavior — basically everything you need for real-world scraping.

👉 If you want to compare it with other tools, check out this guide to the best AI web scrapers

Overall, the Crawl4AI web scraping tool is less about “scraping pages” and more about building reliable data pipelines for AI systems.

Crawl4AI web scraping: FAQs

What is Crawl4AI and why is it useful for LLMs?

Crawl4AI is a web scraping tool designed to extract clean, structured data from websites. It's useful for LLMs because it delivers ready-to-use content (like JSON or Markdown), reducing preprocessing and making it a good fit for RAG and AI pipelines.

How does Crawl4AI handle dynamic content rendered with JavaScript?

Crawl4AI uses real browser automation to render JavaScript-heavy pages, allowing it to extract fully loaded content just like a user would see it. For more details, check out this guide on Web scraping dynamic content.

Does Crawl4AI offer features to bypass bot detection?

Yes, Crawl4AI includes stealth features like browser fingerprinting control, proxy support, and behavior simulation to reduce blocking risks. Learn more about techniques in Web scraping without getting blocked.

How does the "Extraction Strategy" work in Crawl4AI?

The Extraction Strategy in Crawl4AI defines how data is pulled from a page. You can customize rules to extract specific elements, structure output, and filter noise, making the Crawl4AI web scraper flexible for different use cases.

Is Crawl4AI suitable for large-scale crawling tasks?

Yes, Crawl4AI supports asynchronous crawling, which makes it efficient for large-scale tasks. It can handle multiple requests concurrently, improving speed and scalability. See more on Async scraping in Python.

Conclusion

Crawl4AI web scraping is your go-to when you need full control over web crawling, from basic page grabs to smart, adaptive crawls that know when to stop. You've learned how to install it, spin up your first crawler, extract structured data, grab PDFs or screenshots, and run targeted crawls without over-fetching.

If you'd rather skip the setup and let someone else handle browser infrastructure, proxies, and anti-bot defenses, give ScrapingBee's API a try. Ready to get started? Sign up using the button at the top right of this page to get 1,000 free API calls—more than enough to test your workflow and scrape hundreds of pages.

Use Crawl4AI for maximum flexibility, or ScrapingBee for zero-config speed. Or mix and match. Happy crawling!


Ilya is an IT tutor and author, web developer, and ex-Microsoft/Cisco specialist. His primary programming languages are Ruby, JavaScript, Python, and Elixir. He enjoys coding, teaching people and learning new things. In his free time he writes educational posts, participates in OpenSource projects, tweets, goes in for sports and plays music.